Belgian diplomatic mail goes straight to NSA, right?

Ever since I worked on mailing lists, newsletters and transactional mail, I've had a habit of checking mail server logs.

So I did today, after sending an email to the Belgian Foreign Ministry.

It turns out my mail first passes through the servers of a US-based conglomerate that owns RSA Security, a company that was publicly caught implementing backdoors for the NSA.

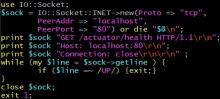

Here's the relevant line from the Exim mainlog:

    diplobel.fed.be R=dnslookup T=remote_smtp H=primary.emea.email.fireeyecloud.com [3.123.5.10]
    X=TLS1.2:ECDHE_RSA_AES_128_GCM_SHA256:128 DN="C=US,ST=California,O=Musarubra US LLC,CN=mx.emea.email.fireeyecloud.com" C="250 2.0.0 3yW4eTU-47539-06g0D5C1032540783A662d84a39 mail accepted for delivery"

Yes, 3.123.5.10 is hosted in Germany, but so what?
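
If you want to run the same check on your own server, these are the Exim log fields that matter: H= (the host name and IP that accepted the message), X= (the negotiated TLS protocol and cipher), DN= (the subject of the certificate the receiving server presented) and C= (the remote server's SMTP confirmation). Below is a minimal Python sketch that pulls those fields out of a single delivery line. `parse_delivery` is a hypothetical helper, not part of Exim. The regexes assume the quoting seen in the line above. The X= split assumes the "protocol:cipher:bits" layout shown there, and the DN split ignores escaped commas.

```python
import re

# One "KEY=value" field from an Exim log line. Keys are upper-case tags
# such as R, T, H, X, DN, C; values are either "double-quoted" (may contain
# spaces) or a run of non-space characters. Keys must start a token.
FIELD_RE = re.compile(r'(?<!\S)([A-Z][A-Z0-9]*)=("(?:[^"\\]|\\.)*"|\S+)')

# H= is logged as "H=hostname [ip.address]"; capture both parts.
HOST_RE = re.compile(r'(?<!\S)H=(\S+) \[([^\]]+)\]')


def parse_delivery(line: str) -> dict:
    """Hypothetical helper: extract routing, host and TLS details from one line."""
    fields = {}
    for key, value in FIELD_RE.findall(line):
        if value.startswith('"'):
            value = value[1:-1]  # drop the surrounding quotes
        fields[key] = value

    info = {
        "router": fields.get("R"),
        "transport": fields.get("T"),
        "confirmation": fields.get("C"),
    }

    host = HOST_RE.search(line)
    if host:
        info["host"], info["ip"] = host.groups()

    # Assumed layout of X=: "protocol:cipher:bits", as in the line above.
    if "X" in fields:
        info["tls_protocol"], info["tls_cipher"], bits = fields["X"].split(":")
        info["tls_bits"] = int(bits)

    # DN= is the peer certificate subject. Naive split on commas: this
    # assumes no escaped commas inside the attribute values.
    if "DN" in fields:
        info["cert_subject"] = dict(
            part.split("=", 1) for part in fields["DN"].split(",")
        )
    return info


line = (
    'diplobel.fed.be R=dnslookup T=remote_smtp '
    'H=primary.emea.email.fireeyecloud.com [3.123.5.10] '
    'X=TLS1.2:ECDHE_RSA_AES_128_GCM_SHA256:128 '
    'DN="C=US,ST=California,O=Musarubra US LLC,'
    'CN=mx.emea.email.fireeyecloud.com" '
    'C="250 2.0.0 3yW4eTU-47539-06g0D5C1032540783A662d84a39 '
    'mail accepted for delivery"'
)

info = parse_delivery(line)
print(info["host"], info["ip"])   # → primary.emea.email.fireeyecloud.com 3.123.5.10
print(info["cert_subject"]["O"])  # → Musarubra US LLC
```

Note that the IP's geolocation only shows where the machine sits. The O= attribute of the certificate subject shows who presented the certificate, and that is the detail this post is about.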